What Is Agentic AI? Autonomous AI Beyond Chatbots

Agentic AI explained: how it differs from chatbots, where it's used in 2026, key frameworks, and the challenges ahead.

When ChatGPT launched, most people understood AI as something you ask questions and get answers from. That mental model held up for a while. But through 2024 and 2025, something shifted. AI stopped just answering and started doing: planning multi-step tasks, using external tools, evaluating results, and adjusting course on its own. By 2026, frameworks like LangGraph (v1.0 GA), CrewAI (v1.10), and the OpenAI Agents SDK have matured enough for production deployments.

The industry calls this Agentic AI.

How Is This Different from a Chatbot?

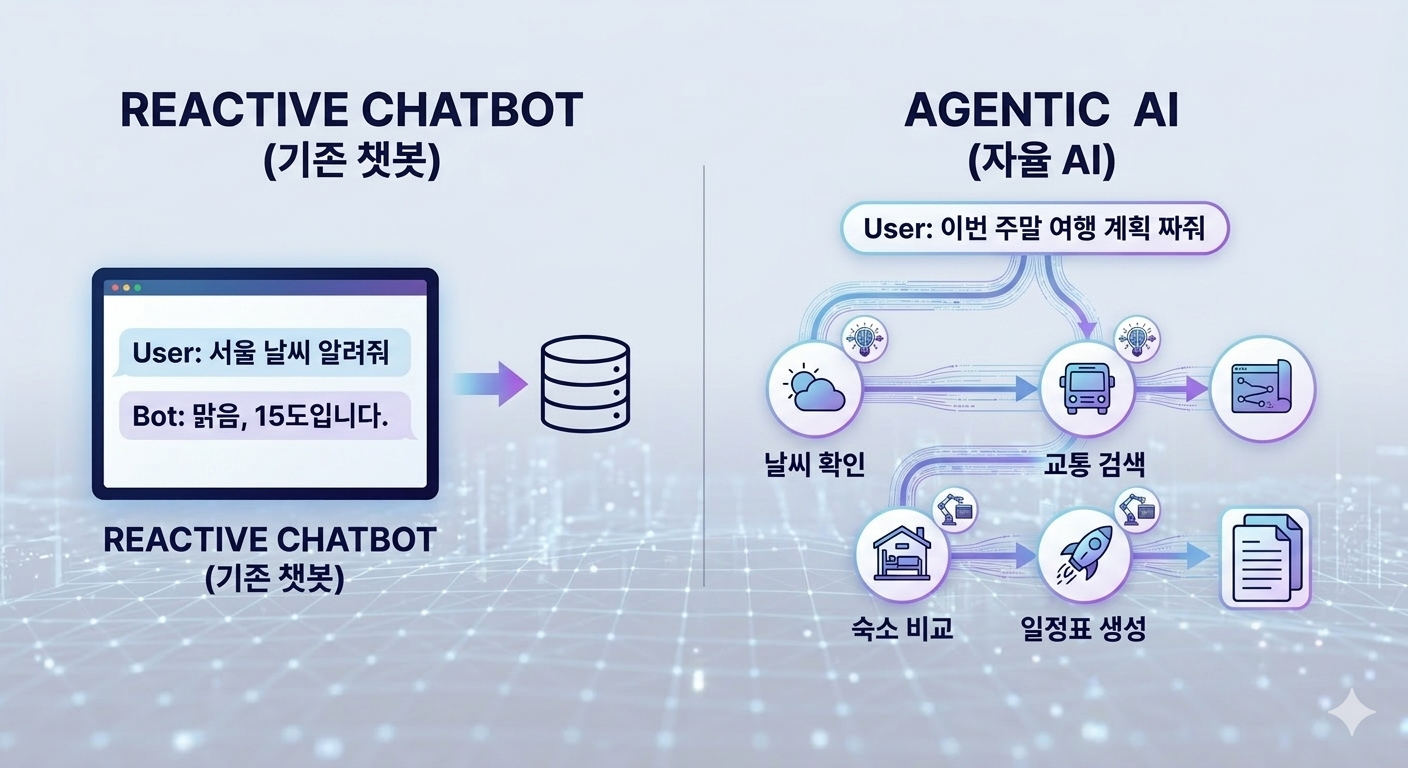

Traditional AI chatbots are reactive. You ask, they answer, done. They might remember previous messages in a conversation, but they never initiate anything on their own.

Agentic AI works differently. Give it a goal, and it breaks that goal into steps, executes each step, and adjusts its path based on intermediate results. If something fails, it tries a different approach. If it needs information it doesn't have, it calls a search tool to go find it.

A quick comparison:

- Chatbot: "What's the weather in Tokyo?" → "Sunny, 18°C"

- Agent: "Plan my weekend trip" → checks weather → searches transport options → compares hotels → generates an itinerary

The fundamental difference is that an agent runs through multiple steps to produce a result, rather than ending at a single question-answer exchange.

How Agents Actually Work

The core loop is plan → tool → observe → repeat. Rather than splitting this into abstract sections, here's a concrete scenario.

Someone gives a coding agent this instruction:

"Find the slow API endpoints in this project and optimize them."

The agent starts by planning. It examines the project structure, identifies API route files, and decides on an order for benchmarking. The key point: no human specified these steps. The AI figured out the approach itself.

Next, it uses tools. It reads files from the filesystem to analyze code, runs profiling tools in the terminal, and measures database query execution times. An LLM on its own only handles text — but connect it to tools and it can interact with the real world. The LLM acts as the brain that decides which tool to use and when.
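To make the "brain picks a tool" idea concrete, here is a minimal sketch of tool dispatch. Everything in it is hypothetical: the tool names (`read_file`, `run_profiler`), the fake file contents, and the timings are stand-ins, and a real agent would receive the tool call from an LLM rather than from a hard-coded dictionary.

```python
from typing import Callable

# Hypothetical tools. Each takes one string argument and returns text,
# because text is what gets fed back to the model.
def read_file(path: str) -> str:
    fake_fs = {"routes/orders.py": "def list_orders(): ..."}
    return fake_fs.get(path, f"error: {path} not found")

def run_profiler(endpoint: str) -> str:
    fake_timings_ms = {"/orders": 1840, "/health": 3}
    ms = fake_timings_ms.get(endpoint)
    if ms is None:
        return f"error: unknown endpoint {endpoint}"
    return f"{endpoint}: {ms} ms"

# Registry mapping tool names to the functions that implement them.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "run_profiler": run_profiler,
}

def dispatch(tool_call: dict) -> str:
    """Run the tool the model asked for and return its output as text."""
    tool = TOOLS.get(tool_call["name"])
    if tool is None:
        return f"error: unknown tool {tool_call['name']}"
    return tool(tool_call["argument"])

# In a real agent this dictionary would come from the LLM's response.
print(dispatch({"name": "run_profiler", "argument": "/orders"}))
```

Returning errors as plain text instead of raising is a deliberate choice in this sketch: the model can read the message and pick a different tool on the next turn.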

Then it observes the results. The profiling output reveals an N+1 query problem on a specific endpoint. The agent interprets this and decides on a fix.

Finally, it acts on what it learned. It patches the code, runs tests, and if they pass, moves to the next endpoint. If tests break? It reads the error logs and tries again. This loop keeps running until the goal is met.
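The whole scenario can be compressed into a small loop. This is a sketch under heavy assumptions: `fake_model` is a rule-based stand-in for an LLM call, `run_tool` returns canned strings instead of touching a real project, and the `/orders` endpoint is invented. What it does show faithfully is the shape of the loop: decide, call a tool, record the observation, repeat until done or until a step limit is hit.

```python
def fake_model(goal: str, history: list[tuple[str, str]]) -> dict:
    """Stand-in for an LLM call: chooses the next action from past observations."""
    if not history:
        return {"action": "profile", "arg": "/orders"}
    last_action, last_observation = history[-1]
    if last_action == "profile" and "N+1" in last_observation:
        return {"action": "patch", "arg": "/orders"}
    if last_action == "patch":
        return {"action": "run_tests", "arg": ""}
    if last_action == "run_tests" and "passed" in last_observation:
        return {"action": "finish", "arg": "optimized /orders"}
    return {"action": "finish", "arg": "gave up"}

def run_tool(action: str, arg: str) -> str:
    """Hypothetical tools with canned outputs."""
    canned = {
        "profile": f"{arg}: 1840 ms, N+1 query detected",
        "patch": f"{arg}: replaced per-row queries with one join",
        "run_tests": "12 passed, 0 failed",
    }
    return canned[action]

def run_agent(goal: str, max_steps: int = 10) -> tuple[str, list[tuple[str, str]]]:
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):
        decision = fake_model(goal, history)                          # plan
        if decision["action"] == "finish":
            return decision["arg"], history
        observation = run_tool(decision["action"], decision["arg"])   # tool
        history.append((decision["action"], observation))             # observe
    return "stopped: step limit reached", history

result, history = run_agent("Find the slow API endpoints and optimize them.")
print(result)
for action, observation in history:
    print(f"{action}: {observation}")
```

The `max_steps` cap is worth keeping even in a toy version. Without it, a model that never decides to finish would loop forever.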

Memory plays a big role here too. "I fixed endpoint #3 by adding an index, so if I see a similar pattern, I'll try the same approach" — that kind of reasoning requires remembering past actions. Agents combine short-term memory (current task context) with long-term memory (user preferences, past history) to make smarter decisions over time.
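One simple way to picture the two kinds of memory is a scratchpad that is wiped after each task plus a lookup table that survives. The class below is a hypothetical illustration, not how any particular framework stores memory; production systems often use databases or vector stores for the long-term part.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class AgentMemory:
    # Short-term: notes about the task currently in progress.
    short_term: list[str] = field(default_factory=list)
    # Long-term: problem pattern -> fix that worked before.
    long_term: dict[str, str] = field(default_factory=dict)

    def note(self, event: str) -> None:
        self.short_term.append(event)

    def remember_fix(self, pattern: str, fix: str) -> None:
        self.long_term[pattern] = fix

    def recall_fix(self, pattern: str) -> Optional[str]:
        return self.long_term.get(pattern)

    def end_task(self) -> None:
        # Task context is discarded; learned fixes are kept.
        self.short_term.clear()

memory = AgentMemory()
memory.note("endpoint #3: slow lookup on unindexed column")
memory.remember_fix("slow lookup on unindexed column", "add an index")
memory.end_task()

# Later, on a different endpoint with the same symptom:
suggestion = memory.recall_fix("slow lookup on unindexed column")
print(suggestion)
```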

Where Agentic AI Is Already Being Used

The most active area is coding agents. Claude Code, GitHub Copilot Workspace, and Cursor are the obvious examples. What they have in common is that they've moved past autocomplete. They analyze issues, modify code, run tests, and create PRs — handling entire development workflows. In 2024, the story was "AI writes code." In 2026, it's closer to "AI performs development tasks."

Customer support has made real progress too. Chatbots used to just look up FAQ answers. Now agents can query order systems, process refunds, and decide when to escalate to a human representative. They're handling actual business operations, not just responding to questions.

Data analysis and workflow automation are expanding fast as well. Ask an agent to "find anomalies in last quarter's revenue" and it can load data, run statistical analysis, create visualizations, and draft a report, all in one flow. Platforms like n8n and Make connect LLMs to other apps to automate email classification, document summarization, and scheduling.

Frameworks — What's Available

If you want to build agents yourself, you've got options. But each framework has a different philosophy, so picking the right one matters.

| Framework | Created By | Strength | Best For |
|---|---|---|---|
| LangGraph | LangChain | State-based graph for fine-grained flow control | Complex workflows with lots of branching |
| CrewAI | CrewAI | Multi-agent team collaboration | Role-based scenarios like "researcher → writer → reviewer" |
| OpenAI Agents SDK | OpenAI | Lightweight, fast prototyping | Single agents, quick proofs of concept |
| Claude Agent SDK | Anthropic | Safety and controllability by design | Claude-based agents, production safety |

If you're starting out, OpenAI Agents SDK or the Claude Agent SDK are good for getting your bearings. As projects get more complex, moving to LangGraph makes sense. CrewAI is worth considering when you have a clear reason for multiple agents working together — multi-agent isn't automatically better.

The Honest Downsides

Agentic AI can sound like magic, but reality is messier.

The biggest issue is error compounding. If an agent makes a bad call at step 3 of a 10-step plan, the remaining 7 steps go off the rails. With a chatbot, you can correct course on the next message. With an autonomous agent, by the time you notice the problem, it may have already done a lot of damage. That's why "human-in-the-loop" patterns — where the agent pauses for human approval at key checkpoints — have become essential.
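A back-of-the-envelope calculation shows how quickly this adds up. It assumes every step succeeds independently with the same probability and that the agent never recovers from a mistake, which is a simplification since real agents can retry. The per-step rates are made up for illustration.

```python
def chance_all_steps_succeed(per_step_success: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming independence."""
    return per_step_success ** steps

for p in (0.99, 0.95, 0.90):
    print(f"{p:.2f} per step over 10 steps -> {chance_all_steps_succeed(p, 10):.2f}")
```

Under these assumptions, a step that is right 95% of the time gives a 10-step plan only about a 60% chance of finishing cleanly. Checkpoints help because they cut one long chain into shorter ones.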

Cost is real too. An agent might call an LLM dozens of times for a single task: interpret tool output → decide next action → call another tool → interpret again. For straightforward tasks, throwing an agent at them can be overkill. "Should this be an agent, or would a simple script do the job?" is a question worth asking every time.
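A rough estimate makes the "agent or script?" question easier to answer. The function below is a sketch: the call counts, token counts, and per-million-token prices are invented placeholders, not any provider's real pricing, so plug in your own numbers.

```python
def estimate_task_cost(
    llm_calls: int,
    input_tokens_per_call: int,
    output_tokens_per_call: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Rough cost in USD of one task, assuming every call is about the same size."""
    input_cost = llm_calls * input_tokens_per_call * usd_per_million_input / 1_000_000
    output_cost = llm_calls * output_tokens_per_call * usd_per_million_output / 1_000_000
    return input_cost + output_cost

# Hypothetical prices and sizes, for illustration only.
PRICE_IN, PRICE_OUT = 3.0, 15.0

single_answer = estimate_task_cost(1, 4000, 500, PRICE_IN, PRICE_OUT)
agent_run = estimate_task_cost(30, 4000, 500, PRICE_IN, PRICE_OUT)

print(f"one chatbot answer: ${single_answer:.4f}")
print(f"30-call agent run:  ${agent_run:.4f}")
```

In practice the gap is often wider than a simple multiple, because each agent call tends to carry the growing history of earlier tool outputs as input.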

Security can't be ignored either. If an agent can execute code, modify files, and call external APIs, the attack surface grows significantly. Prompt injection, where malicious text in a web page, file, or tool result gets treated as an instruction, can manipulate an agent's behavior. Modern frameworks are investing heavily in permission controls, sandboxing, and tool-call approval mechanisms for exactly this reason.
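Here is one way a tool-call approval gate could look. It is a sketch, not any framework's actual API: the tool names, the two permission tiers, and the `approve` callback are all hypothetical. The idea is that read-only tools run freely, risky tools wait for a human decision, and anything unlisted is refused.

```python
from typing import Callable

SAFE_TOOLS = {"read_file", "run_tests"}               # run without asking
NEEDS_APPROVAL = {"write_file", "call_external_api"}  # pause for a human

def guarded_call(
    name: str,
    arg: str,
    tool: Callable[[str], str],
    approve: Callable[[str, str], bool],
) -> str:
    """Run a tool only if policy allows it, asking for approval when required."""
    if name in SAFE_TOOLS:
        return tool(arg)
    if name in NEEDS_APPROVAL:
        if approve(name, arg):
            return tool(arg)
        return f"denied: {name} was not approved"
    # Default-deny: tools that are not listed never run.
    return f"blocked: {name} is not on the allowlist"

def echo_tool(arg: str) -> str:
    return f"ok: {arg}"

def deny_all(name: str, arg: str) -> bool:
    # Stand-in for a real prompt shown to a human reviewer.
    return False

print(guarded_call("read_file", "app.py", echo_tool, deny_all))
print(guarded_call("write_file", "app.py", echo_tool, deny_all))
print(guarded_call("drop_database", "prod", echo_tool, deny_all))
```

The default-deny branch matters most here. If a prompt injection convinces the model to request a tool nobody anticipated, the gate refuses it without needing a rule for that specific case.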

Where This Is Heading

The shift is from "asking AI questions" to "giving AI tasks." Many in the industry see this not as a passing trend, but as a fundamental change in how AI gets used.

That said, expecting agents to handle everything right now is premature. They still need supervision, and cost-effectiveness varies by use case. The practical move at this point is understanding what the technology is, and identifying where in your own work it would actually make a difference.

Agents are going to get smarter — that's just a matter of time. Getting familiar with them now puts you ahead of the curve.