Kubernetes Basics: Why You Need It and How to Start

Why Docker alone isn't enough, core Kubernetes concepts, and how to get a local cluster running in minutes.

Once you learn Docker, a question inevitably follows: "What happens when I have 10, 50, 100 containers?" Docker can spin up and tear down individual containers just fine. But when a service grows, you need containers to restart automatically when they crash, scale up when traffic spikes, and deploy without downtime. Doing all that manually doesn't scale.

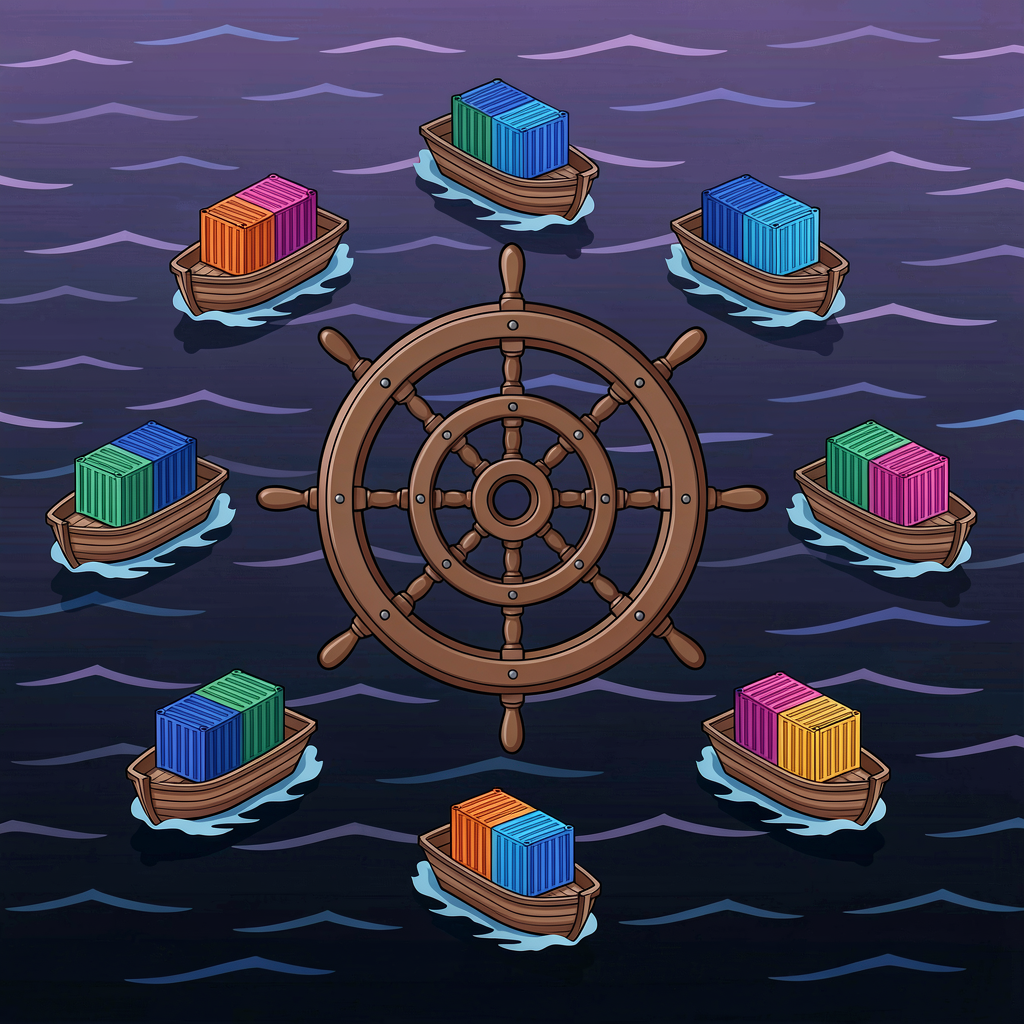

Kubernetes (K8s) solves this problem. It's a container orchestration system — an automated manager for your containers.

Why Docker Compose Falls Short

Docker Compose can manage multiple containers. For development or small services, it's perfectly fine. But production brings limitations.

Limited self-healing. The restart: always option restarts a container whose process crashes, but nothing reschedules your containers if the host itself goes down; you bring them back yourself.

Primitive scaling. docker compose up --scale app=3 adds instances, but there's no auto-scaling based on traffic. Load balancing needs separate configuration.

Single-host only. Docker Compose runs on one machine. Distributing containers across multiple servers isn't something Compose handles.
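For reference, here is a minimal sketch of what the Compose features mentioned above look like in a file. The file name compose.yaml, the service name app, and the image my-app:1.0 are assumptions for illustration.

```yaml
# compose.yaml (hypothetical file; service and image names are assumptions)
services:
  app:
    image: my-app:1.0
    # Restarts the container when its process exits.
    # This does nothing if the host machine itself fails.
    restart: always
    # Publish only the container port, so that `--scale app=3` does not
    # collide on a fixed host port; Docker picks a free host port per instance.
    ports:
      - "3000"
```

Running docker compose up -d --scale app=3 against this file starts three instances, all on the same host. Spreading them across machines or scaling on load is still up to you.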

Kubernetes addresses all of these: automatic restarts and rescheduling, auto-scaling driven by metrics such as CPU usage, multi-node distribution, and zero-downtime deployments.

Architecture Overview

A Kubernetes cluster has two main parts: the Control Plane and Worker Nodes.

Control Plane

The brain of the cluster. It decides things like "run 3 replicas of this container" and "route traffic here."

Key components:

- API Server — All communication goes through here. When you run kubectl commands, they hit the API server

- etcd — A distributed key-value store holding all cluster state. Which Pods run where, what the configurations are — everything lives in etcd

- Scheduler — Decides which node gets a new Pod based on available CPU, memory, and other constraints

- Controller Manager — Watches cluster state and reconciles the actual state with the desired state

Worker Nodes

The servers where containers actually run. Each node has:

- kubelet — An agent that takes instructions from the control plane and manages containers on that node

- kube-proxy — Manages network rules and routes traffic to the right Pods

- Container Runtime — Runs the containers. Docker Engine used to be common here, but Kubernetes 1.24 removed its built-in Docker support (dockershim), and containerd is now the usual default

Core Concepts

Kubernetes has a lot of terminology. To start, you only need four things.

Pod

The smallest deployable unit in Kubernetes. Think of it as a wrapper around one or more containers.

"How is that different from a container?" Containers in the same Pod share networking and storage. They get one IP address and can communicate over localhost. In practice, most Pods contain a single container. Multiple containers per Pod are reserved for patterns like sidecars.

Pods are ephemeral. They can die and be recreated at any time, and their IP addresses can change. That's why you don't manage Pods directly — you use Deployments.
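To make the shared network and shared volume idea concrete, here is a sketch of a two-container Pod. It is written as a bare Pod only for illustration; in practice the same spec would sit inside a Deployment's template. The names, the busybox sidecar, and the log path are all assumptions, including the assumption that the app writes to /var/log/app/app.log.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-app-with-sidecar        # hypothetical name
spec:
  volumes:
    - name: shared-logs
      emptyDir: {}                 # scratch volume that lives as long as the Pod
  containers:
    - name: my-app
      image: my-app:1.0            # hypothetical application image
      ports:
        - containerPort: 3000
      volumeMounts:
        - name: shared-logs
          mountPath: /var/log/app  # assumed location of the app's log file
    - name: log-tailer             # hypothetical sidecar
      image: busybox:1.36
      # Create the file if it does not exist yet, then stream it to stdout.
      command: ["sh", "-c", "touch /logs/app.log && tail -f /logs/app.log"]
      volumeMounts:
        - name: shared-logs
          mountPath: /logs         # same volume, mounted at a different path
```

Both containers share the Pod's single IP address, so the sidecar could also reach the app at localhost:3000. Storage is shared only through volumes that both containers mount, like shared-logs here.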

Deployment

A Deployment declares "keep 3 Pods running with this container image." If a Pod dies, it gets replaced automatically. Updates roll out gradually (a rolling update), replacing old Pods with new ones a few at a time, and rollbacks are built in.

apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: my-app:1.0
          ports:
            - containerPort: 3000

This YAML says: create 3 Pods using my-app:1.0. If one dies, immediately create a replacement to maintain the count.
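The rolling update behavior can be tuned in the same manifest. The sketch below extends the Deployment above with an explicit update strategy and a readiness probe. The image tag my-app:1.1 and the /healthz path are assumptions; the app may not expose such an endpoint.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
spec:
  replicas: 3
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1          # allow at most 1 extra Pod during the rollout
      maxUnavailable: 0    # never drop below the desired 3 ready Pods
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: my-app:1.1          # hypothetical new version
          ports:
            - containerPort: 3000
          readinessProbe:            # a new Pod receives traffic only after this passes
            httpGet:
              path: /healthz         # assumed health endpoint
              port: 3000
            initialDelaySeconds: 5
            periodSeconds: 10
```

After kubectl apply -f deployment.yaml, kubectl rollout status deployment/my-app shows the progress of the update, and kubectl rollout undo deployment/my-app returns to the previous version.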

Service

Since Pod IPs change, how do other services find them? A Service gives a group of Pods a stable address (DNS name and IP) and distributes traffic across them.

apiVersion: v1
kind: Service
metadata:
  name: my-app-service
spec:
  selector:
    app: my-app
  ports:
    - port: 80
      targetPort: 3000
  type: ClusterIP

This creates a stable virtual IP and the DNS name my-app-service, both backed by all Pods labeled app: my-app. Other services in the same Namespace can reach them at http://my-app-service, and traffic is routed only to Pods that are ready to serve.

Service types:

- ClusterIP — Internal access only (default)

- NodePort — Opens a port on each node for external access

- LoadBalancer — Provisions a cloud load balancer (an AWS ELB or NLB, a GCP load balancer, etc.)
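Switching types is mostly a matter of changing the type field. As a sketch, the Service below exposes the same Pods through a NodePort; the name my-app-nodeport and the port 30080 are assumptions, with the port chosen from the default NodePort range of 30000-32767.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-app-nodeport    # hypothetical name
spec:
  type: NodePort
  selector:
    app: my-app            # same label selector as the ClusterIP example
  ports:
    - port: 80             # port on the Service's cluster-internal IP
      targetPort: 3000     # port the container listens on
      nodePort: 30080      # port opened on every node (assumed value)
```

With this applied, the app should be reachable at port 30080 on any node's IP address. On minikube, minikube service my-app-nodeport --url prints a URL you can use from your machine.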

Namespace

A way to logically partition a cluster. Useful for separating teams or environments (dev/staging/prod) within the same cluster. Prevents naming collisions and allows resource quotas.

The default Namespace is default. Small projects don't need multiple Namespaces, but they become valuable when running several services on one cluster.
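As a sketch of the resource quota idea, the manifest below creates a Namespace and caps what can run inside it. The name staging and every limit value are assumptions picked for illustration.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: staging              # hypothetical environment name
---
apiVersion: v1
kind: ResourceQuota
metadata:
  name: staging-quota
  namespace: staging
spec:
  hard:
    pods: "20"               # at most 20 Pods in this Namespace
    requests.cpu: "4"        # sum of CPU requests across all Pods
    requests.memory: 8Gi
    limits.cpu: "8"          # sum of CPU limits across all Pods
    limits.memory: 16Gi
```

Resources are placed in a Namespace with the -n flag, for example kubectl apply -f deployment.yaml -n staging. Note that once a quota covers CPU or memory, Pods in that Namespace must declare matching requests and limits or they will be rejected.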

Getting Started Locally

Setting up a production cluster is involved, but running one locally for learning is straightforward.

minikube

A widely used local K8s tool. By default it creates a single-node cluster inside a VM or a Docker container.

# Install (macOS)
brew install minikube

# Start cluster
minikube start

# Check status
minikube status

# Open dashboard (web UI)
minikube dashboard

kind (Kubernetes in Docker)

Creates K8s nodes as Docker containers. It is generally lighter than minikube's VM-based drivers, and it supports multi-node clusters, which makes it popular for CI/CD pipelines too.

# Install
brew install kind

# Create cluster
kind create cluster

# Delete cluster
kind delete cluster
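The multi-node support mentioned above is driven by a small config file. A minimal sketch, assuming you save it as kind-multi-node.yaml (the file name is arbitrary):

```yaml
# kind-multi-node.yaml (hypothetical file name)
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
  - role: control-plane    # one control plane node
  - role: worker           # two worker nodes, each a Docker container
  - role: worker
```

Pass it at creation time with kind create cluster --config kind-multi-node.yaml. Afterwards, kubectl get nodes should list three nodes.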

Either way, install kubectl and you'll use the same commands to interact with the cluster.

Essential kubectl Commands

# Cluster info
kubectl cluster-info

# List nodes
kubectl get nodes

# Apply a configuration
kubectl apply -f deployment.yaml

# List all Pods
kubectl get pods

# View Pod logs
kubectl logs <pod-name>

# Shell into a Pod
kubectl exec -it <pod-name> -- sh

# Scale a Deployment
kubectl scale deployment my-app --replicas=5

# Delete resources
kubectl delete -f deployment.yaml

The core pattern is kubectl apply -f <file> — you declare the desired state and Kubernetes figures out how to get there. This is declarative management. You don't say "start 3 containers." You say "3 containers should be running."

ReplicaSet — Let Deployment Handle It

A ReplicaSet maintains a specified number of Pod replicas. But you almost never create one directly. Deployments create and manage ReplicaSets internally.

When you update a Deployment's Pod template (a new image tag, for example), it creates a new ReplicaSet and scales it up while scaling down the old one. That's how rolling updates work under the hood.

Know that ReplicaSets exist, but let Deployments manage them.

When Kubernetes Is Overkill

Kubernetes is powerful but complex. It's not always the right call.

Small services — If you're running a handful of containers, K8s overhead outweighs the benefits. Docker Compose or a single-server deployment works fine.

Small teams — Running Kubernetes requires infrastructure knowledge. A team of 2-3 people maintaining their own K8s cluster will spend more time on ops than development. Managed services like ECS, Cloud Run, or Vercel are more practical.

Serverless fits better — For irregular traffic and API-heavy workloads, Lambda or Cloud Functions can be a better fit. Zero infrastructure to manage.

Where K8s shines:

- 10+ microservices

- High traffic variability requiring auto-scaling

- Advanced deployment strategies (canary, blue-green)

- Multi-cloud or hybrid cloud requirements
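To give the auto-scaling item above some shape, here is a sketch of a HorizontalPodAutoscaler targeting the my-app Deployment from earlier. The replica bounds and the 70% CPU target are assumptions. It also assumes that a metrics source such as metrics-server is installed and that the containers declare CPU requests, since utilization is measured against them.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-app
spec:
  scaleTargetRef:            # the workload whose replica count is adjusted
    apiVersion: apps/v1
    kind: Deployment
    name: my-app
  minReplicas: 3             # assumed lower bound
  maxReplicas: 10            # assumed upper bound
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # add Pods when average CPU use exceeds 70% of requests
```

On minikube, the metrics source can be enabled with minikube addons enable metrics-server. kubectl get hpa then shows the current and target utilization along with the replica count.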

Next Steps

Start with minikube or kind locally. Deploy a simple web app using a Deployment and Service. Once you understand Pods, Deployments, and Services, you have the foundation that most day-to-day Kubernetes work builds on.

From there, branch out to ConfigMaps/Secrets (configuration management), Ingress (external traffic routing), PersistentVolumes (storage), and Helm (package management). Don't try to learn everything at once — pick up each concept when you actually need it.